NNadir

NNadir's Journal

Meet General Shawn Harris, the Democrat who seeks to oust Marjorie Taylor Greene

Meet Shawn Harris, the Democrat who seeks to oust Marjorie Taylor Greene

He connected with the Washington Blade last week to discuss his candidacy as a Democrat in Georgia’s deep-red 14th Congressional District — and why his promise to deliver for constituents who have been failed by their current representative is resonating with voters across the political spectrum...

... “I was military and I was high-level military,” he said. “So, it’s very easy for Republicans to Google my name and look at my history. My last assignment was in Israel. So that was a very high-level position.”

“The second piece,” Harris said, “is I raise cattle. I raise Red Angus cattle. I’m actually in my office looking out the window at them right now.”

He noted that agriculture dominates Georgia’s economy, particularly “cattle and wineries,” and also said he is an active member of the Georgia Cattlemen’s Association and U.S. Cattlemen’s Association.

“Most cattlemen, at least here in this area, are Republicans,” Harris said, so during the group’s meetings, “they get a chance to meet me just as Shawn, just as another cattleman.” At the same time, he said, “they come out here and visit me on the farm” and vice-versa...

I definitely hope this guy has a serious shot. That awful woman needs to be gone. She's a traitor, a fool, and an embarrassment to our country.

Formal "Justification" for a Fast Lead Cooled Nuclear Reactor Sought in the UK.

Justification sought for use of Newcleo reactor in the UK

Excerpts:

...The NIA noted that a justification decision is one of the required steps for the operation of a new nuclear technology in the UK, but it is not a permit or licence that allows a specific project to go ahead. "Instead, it is a generic decision based on a high-level evaluation of the potential benefits and detriments of the proposed new nuclear practice as a pre-cursor to future regulatory processes," it added...

..."Advanced reactors like Newcleo's lead-cooled fast reactor design have enormous potential to support the UK's energy security and net-zero transition, so we were delighted to apply for this decision," said NIA Chief Executive Tom Greatrex. "This is an opportunity for the UK government to demonstrate that it backs advanced nuclear technologies to support a robust clean power mix and to reinvigorate the UK's proud tradition of nuclear innovation. We look forward to engaging with the government and the public throughout this process and to further applications for new nuclear designs in the future..."

..."We continue to progress our UK plans at pace - aiming to deliver our first of a kind commercial reactor in the UK by 2033. We are but one player in the new nuclear renaissance and we look forward to working with government and the rest of the sector to develop the robust supply chain that can deliver the UK's ambition of 24 GW of nuclear power by 2050..."

24 GWe of nuclear power in Britain definitely falls into the category of "too little, too late." Hopefully the goals will morph into something more aggressive.

Things are pretty dire as I write. I routinely calculate a projection from the data at Weekly average data for CO2 concentrations measured at Mauna Loa. I take the second derivative, d²C/dt², from the difference between the running 52-week average for the rate of CO2 increase at the current date (24.87 ppm/10 years as of this writing) and the value in the first week of 2000 (15.36 ppm/10 years). I then integrate twice and substitute the boundary conditions, the concentration of CO2 reported this week and the current running average for 10-year increases, to obtain a quadratic. If the fossil-fuel-indulgent antinuke rhetoric continues to triumph, the resulting quadratic (as of this week) works out to a concentration of around 519 ppm "by 2050." I expect that 24 GW of additional nuclear power in Britain would barely cover the growth in electricity demand. This is especially true if the very dubious "electrify everything" scheme is allowed to proceed, driven by the hard-to-break but very dangerous belief that so-called "renewable energy" will be something other than useless, which it won't.
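The procedure described above can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions, not the author's actual calculation. It assumes the constant second derivative is the change in the 10-year rate (24.87 minus 15.36 ppm per 10 years) divided by the years elapsed since the first week of 2000. It also treats the current 10-year running average as today's instantaneous rate. The post does not state this week's concentration or the exact date, so `c_now`, `years_elapsed`, and `years_to_2050` are hypothetical placeholders, and the printed result will not necessarily match the 519 ppm figure.

```python
def co2_acceleration(rate_now, rate_2000, years_elapsed):
    """Constant second derivative d2C/dt2 in ppm/yr^2.

    rate_now and rate_2000 are 10-year increases (ppm per 10 years).
    Assumption: d2C/dt2 = (change in rate) / (years between the two dates).
    """
    change_in_rate_per_year = (rate_now - rate_2000) / 10.0  # ppm/yr
    return change_in_rate_per_year / years_elapsed            # ppm/yr^2


def project_co2(c_now, rate_now, accel, years_ahead):
    """Integrate a constant d2C/dt2 twice using today's boundary conditions.

    C(t) = C_now + v_now * t + (a / 2) * t**2, with C(0) = c_now (ppm) and
    dC/dt at t = 0 taken as the current 10-year rate converted to ppm/yr.
    """
    v_now = rate_now / 10.0  # ppm per year
    return c_now + v_now * years_ahead + 0.5 * accel * years_ahead ** 2


if __name__ == "__main__":
    # Rates quoted in the post (ppm per 10 years).
    RATE_NOW, RATE_2000 = 24.87, 15.36
    # HYPOTHETICAL placeholders: the post does not give these values.
    years_elapsed = 24.5   # years since the first week of 2000 (assumed)
    c_now = 425.0          # this week's Mauna Loa reading, ppm (assumed)
    years_to_2050 = 25.5   # years from "this week" to 2050 (assumed)

    a = co2_acceleration(RATE_NOW, RATE_2000, years_elapsed)
    print(f"d2C/dt2 = {a:.4f} ppm/yr^2")
    print(f"C(2050) = {project_co2(c_now, RATE_NOW, a, years_to_2050):.1f} ppm")
```

Replacing the placeholders with the actual weekly reading and date is what turns this sketch into the projection described in the post.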

Britain, I should note, has the world's largest supply of separated reactor-grade plutonium. A lead-cooled fast reactor would therefore allow this plutonium to be used to create more plutonium. That plutonium will certainly be needed if there is to remain any slim hope of avoiding even worse climate change than we are already experiencing, the result of antinukism's grand propaganda success, which has left the planet in flames.

Lead coolants are very attractive to my mind for a number of reasons connected with process intensification: they provide high temperatures that can serve a number of missions not merely connected with producing electricity. Historically, these types of reactors have actually operated on LBE, lead-bismuth eutectic. I note, however, that the use of pure lead will lead to the transmutation of some lead into the far less toxic element bismuth (an ingredient in the OTC product "Pepto-Bismol"), thus generating LBE in situ. This would involve certain technical challenges, but I believe they might well be addressable.
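The in situ bismuth mentioned above would come mainly from neutron capture on lead-208, the most abundant natural lead isotope. Lead-209 then undergoes beta decay with a half-life of roughly 3.3 hours. Captures on the lighter lead isotopes mostly just produce heavier lead. A sketch of the chain:

```latex
{}^{208}\mathrm{Pb} + n \;\longrightarrow\; {}^{209}\mathrm{Pb} + \gamma,
\qquad
{}^{209}\mathrm{Pb} \;\xrightarrow{\;\beta^{-},\ t_{1/2}\,\approx\,3.3\ \mathrm{h}\;}\; {}^{209}\mathrm{Bi} + e^{-} + \bar{\nu}_{e}
```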

Have a pleasant work week.

An Interesting Experiment in Trying to Involve Journalists in Reporting Real Science Correctly.

One of the standard half-serious jokes I often repeat in my writings here, when confronting articles by journalists, is that "One cannot get a degree in journalism if one has passed a college level science course with a grade of C or better." Those articles are typically about energy and the environment, and they are often sensationalist and misleading.

Journalism is generally horrible where scientific issues are involved. The same is true of the American political disaster, in which journalists have been, and still are, actively working to normalize an intellectually deficient and declining criminal fraud as a Presidential candidate while demeaning as "too old" an active, highly functional President. One result of this marketing of bizarre ideology by journalists is climate change. I regard the major issue in climate change as selective attention. Examples include the magnification of the views of extreme scientific minorities in climate denial, and the demonization of nuclear energy, which I continue to insist is the last, best hope for humanity.

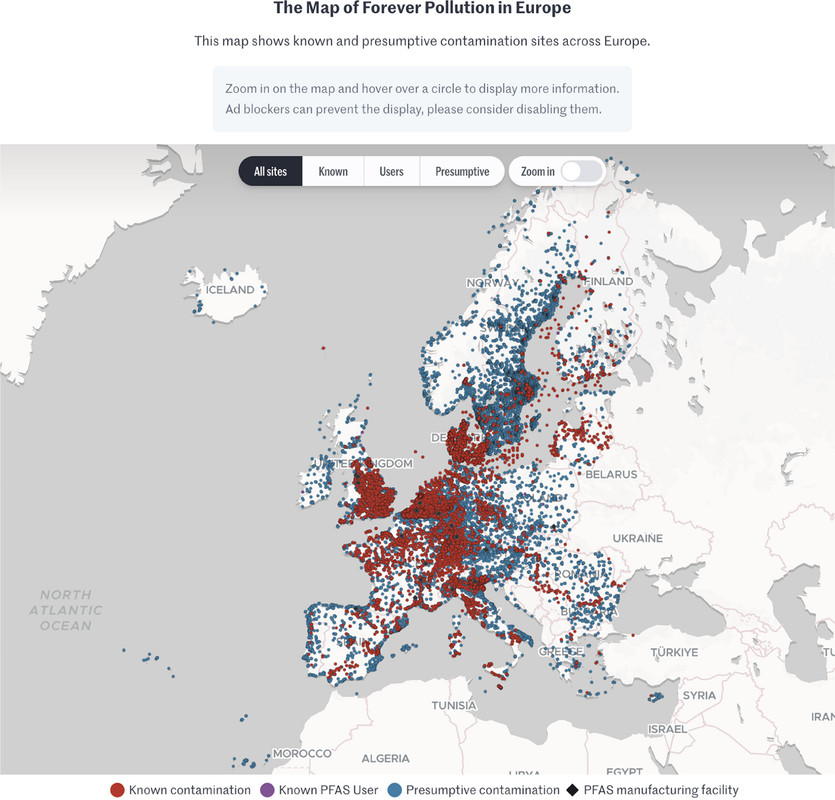

Thus it was with some interest that I came across this paper in a scientific journal in which scientists involved themselves with, and reviewed, journalism in connection with a very serious issue, PFAS (per- and polyfluoroalkyl substances) contamination in Europe: PFAS Contamination in Europe: Generating Knowledge and Mapping Known and Likely Contamination with “Expert-Reviewed” Journalism Alissa Cordner, Phil Brown, Ian T. Cousins, Martin Scheringer, Luc Martinon, Gary Dagorn, Raphaëlle Aubert, Leana Hosea, Rachel Salvidge, Catharina Felke, Nadja Tausche, Daniel Drepper, Gianluca Liva, Ana Tudela, Antonio Delgado, Derrick Salvatore, Sarah Pilz, and Stéphane Horel Environmental Science & Technology 2024 58 (15), 6616-6627

Unfortunately, I don't have time to discuss this paper in much detail, but a few excerpts and a graphic follow.

From the introduction:

PFAS have been broadly detected in environmental media including surface water, groundwater, raw and finished drinking water, soil, air, landfill leachate, sewage sludge, food, and dust. (12–15) As examples, a recent analysis of tap water samples in the United States collected between 2016 and 2021 detected PFAS at 45% of tested locations, (16) and the 2019 French National Biomonitoring Programme measured serum levels of 17 PFAS and detected PFAS in 100% of the 993 participants. (17) It has been argued that PFAS have exceeded a “planetary boundary” of “widespread and poorly reversible risks associated even with low-level PFAS exposures” since global rainwater samples have PFAS levels above proposed regulatory limits designed to protect public health... (1)

...In addition to data gaps due to a lack of testing, the present study was motivated by two additional data gaps. First, unlike in the US where many PFAS testing datasets have been made public by federal and state governments, academic research groups, and environmental advocacy organizations, (19–22) there were very few publicly available data on PFAS contamination across the EU. Second, in most cases, legal protections for industry trade secrets and confidential business information prevent the public from knowing where PFAS are used and emitted. (23,24) An exception to this is the US Toxics Release Inventory program, which has required reporting since 2020 by some firms in a subset of industries for a small number of PFAS. (25) But in most cases, even if a specific company is known to produce and/or use PFAS, it is difficult or impossible for the public to know whether PFAS are used at individual facilities...

The authors enlisted five investigative journalists in five countries (Belgium, France, Germany, Italy, and The Netherlands) in the "Forever Pollution Project" (FPP) and paired them with a team of PFAS scientists, noting that "journalistic projects aiming at generating data in collaboration with scientists rather than reporting on already-produced data are not common."

Not common, indeed. I would expect that most scientists feel as I do: science journalism, except generally when it appears in major science journals, is oblivious when reporting science.

Further down in the article:

The map, which is interactive in the full paper and, I think, at the link given in the caption, but cannot be so here:

The caption:

I don't expect journalism to become a serious mirror of reality in my now short remaining lifetime, but we have to start somewhere; we have to try something, and I applaud this effort.

I wrote earlier today about PFAS and the very dangerous "hydrogen will save us" fantasy often hyped in the scientifically illiterate media:

A Brief Note on the Toxicology Associated With Hydrogen Fuel Cells.

Have a nice Sunday afternoon.

A Brief Note on the Toxicology Associated With Hydrogen Fuel Cells.

Intellectually, and morally, in my view, the hydrogen fuel fantasy should have been, and should be now, a non-starter, simply because of the laws of thermodynamics, notably the 2nd law. Other "bait and switch" fantasies are built on the diversionary claim that fossil fuels can be rendered sustainable, or eliminated by wishful thinking; these include but are not limited to sequestration, wind, and solar. Like them, the use of hydrogen fuels will make things worse, not better.
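One way to see the thermodynamic objection is a toy calculation for a power-to-hydrogen-to-power chain. This is a minimal sketch with hypothetical placeholder efficiencies, not figures from the post or from any particular system. Every conversion step rejects some energy, largely as waste heat. The overall efficiency is therefore the product of the step efficiencies, and it can never exceed the efficiency of the weakest step.

```python
from math import prod


def chain_efficiency(step_efficiencies):
    """Overall efficiency when energy passes through conversion steps in series.

    Each step delivers only a fraction of its input as useful output, so the
    overall efficiency is the product of the individual step efficiencies.
    """
    etas = list(step_efficiencies)  # allow any iterable, consumed once
    for eta in etas:
        if not 0.0 < eta <= 1.0:
            raise ValueError(f"efficiency must be in (0, 1], got {eta}")
    return prod(etas)


if __name__ == "__main__":
    # HYPOTHETICAL placeholder values for illustration only, not measured data.
    steps = {
        "electrolysis": 0.70,
        "compression_and_storage": 0.90,
        "fuel_cell": 0.55,
    }
    overall = chain_efficiency(steps.values())
    print(f"overall efficiency: {overall:.3f}")
    print(f"never exceeds the weakest step: {overall <= min(steps.values())}")
```

Adding a step to the chain can only lower the product, which is the arithmetic behind the objection to inserting hydrogen between a primary energy source and its end use.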

I laid out, in some detail, the facts connected with the nature of hydrogen as a front for the fossil fuel industry in a rather long post here: A Giant Climate Lie: When they're selling hydrogen, what they're really selling is fossil fuels.

This is also laid out in the scientific literature for China, to which the fossil fuel salespeople and salesbots who write here and elsewhere often appeal to rebrand fossil fuels as "hydrogen":

Subsidizing Grid-Based Electrolytic Hydrogen Will Increase Greenhouse Gas Emissions in Coal Dominated Power Systems Liqun Peng, Yang Guo, Shangwei Liu, Gang He, and Denise L. Mauzerall Environmental Science & Technology 2024 58 (12), 5187-5195.

One of the "bait and switch" tactics used to greenwash fossil fuels by talking about hydrogen is to talk about hydrogen fuel cells for cars. Hydrogen fuel cells actually are commercial products occasionally used for back up power for things like cell phone towers, although they are hardly mainstream.

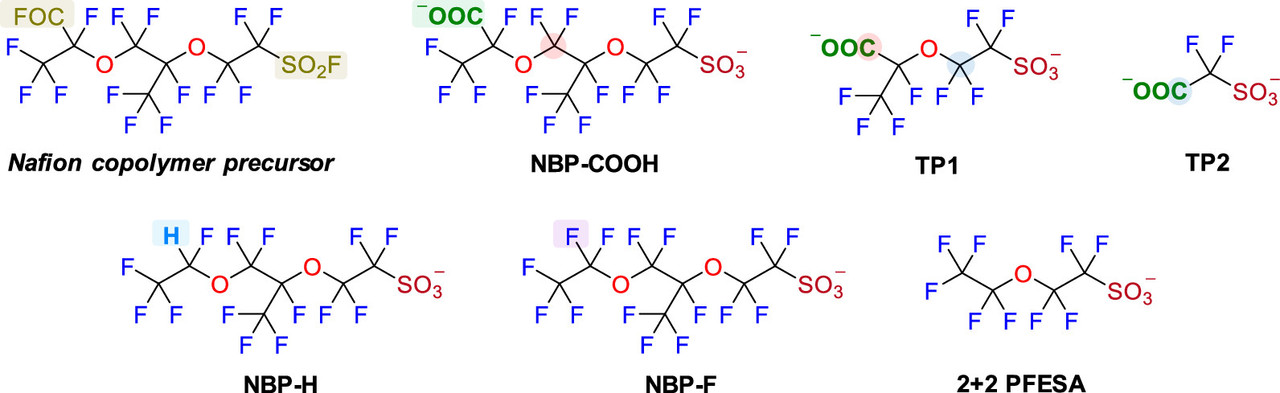

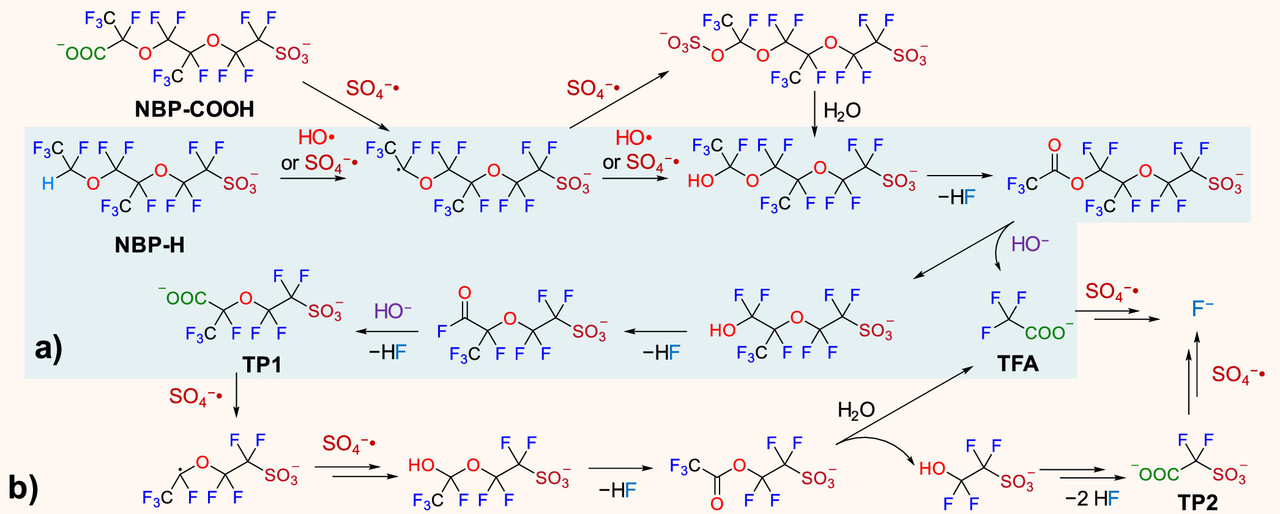

One of the major environmental risks garnering increasing attention is PFAS, "per- and polyfluoroalkyl substances," the so-called "forever chemicals," which are known to have profound toxicological profiles. I know, but I don't believe most people know, that the issue of PFAS is very much connected with hydrogen fuel cells. The widespread use of these fuel cells, for instance in cars, would make this already intractable problem far worse.

A paper on strategies to degrade these compounds has appeared in the scientific literature as an open access paper that I came across in my general reading. It is here:

Oxidative Transformation of Nafion-Related Fluorinated Ether Sulfonates: Comparison with Legacy PFAS Structures and Opportunities of Acidic Persulfate Digestion for PFAS Precursor Analysis Zekun Liu, Bosen Jin, Dandan Rao, Michael J. Bentel, Tianchi Liu, Jinyu Gao, Yujie Men, and Jinyong Liu Environmental Science & Technology 2024 58 (14), 6415-6424

Again, the paper is open access and will be generally arcane for people not familiar with scientific punctilios. A relevant paragraph referring to fuel cells and their relationship to PFAS is this one:

Nafion, which is used in commercial proton exchange membrane fuel cells, is a fluoropolymer of PFAS compounds.

Two figures from the paper:

The caption:

The sulfate and hydroxyl radicals in this pathway require energy to create. I regard oxidative radicals of this type as the best pathway for mineralizing (destroying) PFAS. The ideal type of energy for this purpose is ionizing radiation, high-energy radiation in the UV to gamma range. This energy is best obtained from radioactive materials, which are most easily accessed in used nuclear fuels.

Have a pleasant Sunday.

That scene in "My Dinner With Andre" About a Sexy Picture, and Looking at Pictures of My Wife When She Was Young.

Last week I wrote about how my wife was going through a box of her late mother's pictures. I found myself remarking on how beautiful she looked in a picture someone took of us at the North Rim of the Grand Canyon, just before we married, a picture that somehow ended up in my mother-in-law's collection and wasn't in ours.

My wife finally got around to going through her late Mom's photo albums.

A strange feature of the picture is that in it she actually looks younger than she was at the time; she was in her early twenties but looked like a teenager. By contrast, I looked older than I actually was; I was in my early thirties. My hair was still there and dark, and in the picture it was relatively long and wild in what may have been a wind. (The gap in our ages looked larger than it was, and my wife got "proofed" in restaurants and bars into her thirties. As a result, I used to get some dirty looks from time to time, as if I were an Epsteinish/Trumpish/Gaetzish sort of pervert.)

Well, in any case, I scanned the picture, and I've looked at it a number of times over the last week, fascinated by it. As I was doing so this morning, it suddenly struck me that the man in the picture, me, didn't at that time know very much at all about the world. I was ignorant, even more arrogant than I am now, and kind of smug. Although I was formally educated, I was largely unacquainted with the deeper reality of the things to which my education had merely exposed me.

Then I looked at my wife in the picture and thought about who she was at the time. The Mona Lisa-type smile on her face concealed the fact that she'd just emerged from a very unhappy upbringing and was possessed of a deep insecurity. She was also, wisely I think, unsure whether it was actually a good idea to be with me, there and then.

In that beautiful movie My Dinner With Andre there's a scene where Andre Gregory discusses a picture of his wife, Mercedes "Chiquita" Gregory. From the script:

Script, My Dinner With Andre

The first time I went away with my (then future) wife, I took her to what was my favorite place in the universe, Big Sur. We stayed in a beautiful small cabin in a grove of redwoods, a night I have never forgotten and about which I often muse. I consider it the best night of my somewhat pathetic life up to that point, although in the years of marriage, many similarly beautiful moments would, happily, recur over and over again.

In the morning after that night in the cabin, we went on a hike up a small mountain in Pfeiffer Big Sur State Park, and we came upon a grove of pin oaks, and I took pictures of her up there in that grove.

One of those pictures in particular has thrilled me ever since I took it and developed it almost 40 years ago. In it, my wife has exactly the same expression she was wearing the very first time I saw her, and the same pose: her legs stretched out, looking down almost shyly at her feet, detached from the outer world but seemingly deep in an inner world. It is a kind of impenetrable look that could, perhaps should, be recognized as having an aura of buried pain, a resigned sadness strangely contrasting with a sublime physicality. In the Big Sur picture, as opposed to my memory of first seeing her, she is sitting on a low branch of a massive oak in the grove, naked. I must have called that picture up a thousand times, but today I find myself asking whether I ever really saw it, I mean, saw it for real.

Of course, when I saw my wife for the first time, I was just another of the many puerile men possessed of less than noble erotic fantasies about her looks; I had no idea who she actually was. Ultimately I did get to know her, and to see her divorced from any kind of vanity. She was somewhat distressed about the somewhat dangerous situations into which her looks had gotten her, although she managed to emerge largely unscathed. (Early on in our friendship, she could have suspected me of being another dangerous man of that type. Happily, she didn't translate those experiences into suspicions of me or my intentions, although she might well have done so.)

Away from all that, from the attentions of puerile men including me, she was always warm, kind, generous, funny and bright, surprisingly accessible and down to Earth, although she kept the deeper things to herself. I could not help falling in love.

But the point is that my admiration for these pictures of her youth, as Andre Gregory confessed in his own case, is not an act of seeing. It is definitely an act of not seeing anything at all.

We were younger, better looking then, but frankly and honestly, as life winds down, I prefer who we are now. We hadn't lived at all then. We didn't know anything then. We've lived now. We know something, perhaps not a lot, but something now.

Most importantly, something we know now is what it is to have lived at all. That matters.

BWXT announces nuclear manufacturing plant expansion

BWXT announces nuclear manufacturing plant expansion

An excerpt:

BWXT is headquartered in Virginia and has 14 operating sites in the United States, Canada, and the United Kingdom. Its joint ventures provide management and operations at a dozen U.S. Department of Energy and NASA facilities.

What they are saying: “Our expansion comes at a time when we’re supporting our customers in the successful execution of some of the largest clean nuclear energy projects in the world,” said John MacQuarrie, president of commercial operations for BWXT. “The global nuclear industry is increasingly being called upon to mitigate the impacts of climate change and increase energy security and independence.

Premier of Ontario Doug Ford said, “We’re thrilled to see BWXT expand its footprint and create hundreds of new jobs in Cambridge. As our province continues to lead the future of nuclear energy, the company’s investment will help provide Ontario families and businesses with access to clean, reliable, and affordable electricity for generations to come...”

I added the bold.

This won't go over well in the cults that drive the so-called "renewable energy"/fossil fuel nexus, but for humanity as a whole this is a good thing. It is building back better the nuclear manufacturing infrastructure that fear and ignorance destroyed, the destruction that left the planet in flames.

Nuclear systems can do more than provide families "with access to clean, reliable, and affordable electricity for generations to come," although there's certainly nothing wrong with that. It's nice to see people thinking about future generations. Advocates of the so-called "renewable energy"/fossil fuel nexus certainly ignore them; there, future generations are treated with contempt.

Personally, though, I'm opposed, on thermodynamic grounds, to the bad "electrify everything" idea that's gaining unwarranted popularity, but we have to restart somewhere. (Electricity is, by its nature, thermodynamically degraded.) The planet is already in flames, notably in Canada, one of the regions burned as a result of climate change. This expansion therefore comes under the rubric of "too little, too late" and is hardly enough. Still, the world seems to be overcoming the triumph of antiscience antinukism to try to save what is left to be saved, and perhaps even restore some of what can be restored.

Ontario is rising as a center of going nuclear against climate change, something of which citizens of the Province should be proud.

Non Nuclear Portions of Wyoming's Kemmerer Nuclear Power Plant to Start Construction This Summer.

TerraPower submits Natrium construction application to the NRC

Excerpts:

TerraPower purchased land in Wyoming near one of the state’s retiring coal plants to deploy its Natrium sodium fast reactor technology. Kemmerer Power Station Unit 1 would operate a 345-MW sodium-cooled reactor in conjunction with molten salt–based energy storage...

...A closer look: Natrium will use liquid sodium as a coolant instead of water. According to TerraPower, the reactor features improved fuel utilization, enhanced safety features, and a streamlined plant layout that will require fewer overall materials to construct. Because of the reactor’s storage technology, it can boost the system’s output to 500 MWe for more than five and a half hours when needed to meet additional grid demand...

... Support for the project: Natrium is one of two advanced reactor demonstration projects selected for competitive funding through the Department of Energy’s Advanced Reactor Demonstration Program. (X-energy’s Xe-100 is the other.) TerraPower received $1.6 billion in funding from the Bipartisan Infrastructure Law signed by President Biden in November 2021, which is to be used to ensure the completion of the plant. The company has also raised more than $1 billion in private funding.

Last month, TerraPower announced the second round of contracts for long-lead suppliers supporting the development of the Natrium reactor.
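The storage figures quoted above imply a rough lower bound on the electrical energy the molten salt system must supply during a boost. This assumes the boost is measured relative to the 345 MW baseline rating and that "more than five and a half hours" means at least 5.5 h:

```latex
E_{\text{boost}} \;\geq\; (500 - 345)\ \mathrm{MW_e} \times 5.5\ \mathrm{h}
\;=\; 155\ \mathrm{MW_e} \times 5.5\ \mathrm{h}
\;=\; 852.5\ \mathrm{MWh_e}
```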

I'm not a sodium coolant kind of guy myself, but this reactor has features of the "breed and burn" concept, which will ultimately allow it to run on depleted uranium, if I understand the design correctly.

Light a Candle, an Innovative Burn Up Strategy for Nuclear Reactors. (Hiroshi Sekimoto)

This will help to generate the plutonium necessary if we are to have any hope of addressing climate change.

Dr. Kathryn Huff Is Leaving DOE, Praising the Administration as She Goes.

After serving her country for three years, Dr. Kathryn Huff is leaving DOE:

Kathryn Huff stepping down from DOE Nuclear Energy post

Excerpt:

“Serving in this capacity has been an unparalleled privilege, and I’m immensely grateful for the opportunity to have worked alongside you--the dedicated and talented public servants in Nuclear Energy, in DOE, and across the Biden-Harris Administration,” Huff wrote in an email announcement to colleagues last week. “I chose this timing to enable the smoothest transition back to my professorship at the University of Illinois at Urbana-Champaign where my beloved research, students, husband, and dog await...”

... “Reflecting on the past three years, I’m astonished by the tangible progress the U.S. has made in nuclear energy,” Huff said in her note to colleagues. “Reactors once destined to shut down now have a role in our 2035 and 2050 goals, new reactors are coming online, and the commercialization of advanced reactors has begun. We’re also securing the front end of the nuclear fuel cycle, restarting a consent-based approach to spent nuclear fuel management, and expanding international cooperation on peaceful nuclear technology. Perhaps most notably, the U.S. joined a groundswell of other nations in committing to triple nuclear energy capacity by 2050 (to) address the climate crisis and improve energy security.”

She added, “I will, of course, continue to contribute to the advancement of nuclear energy however I can, for as long as I can, from wherever I am.”

I understand her reasons for leaving, but I'm a little sad to see her go. She was, to my mind, a star in the Biden-Harris administration, a high-powered academic scientist bringing high-level expertise to government. Her accomplishments in building back better our nuclear power infrastructure do seem to have been significant. They are the most significant since Steven Chu, in the Obama administration, started us on the now nearly complete path to the Vogtle reactors.

A Recent Thread Not Removed or Deleted Is Not Appearing In the Science Forum Titles.

It refers to an article in a scientific journal.

The post is this one: Nature Energy: An Estimate of the Death Toll Associated with a US Nuclear Power Phase Out.

Oddly, it seems to have received one of its 7 recs after disappearing. (I can access it through "My Posts.")

???

Nature Energy: An Estimate of the Death Toll Associated with a US Nuclear Power Phase Out.

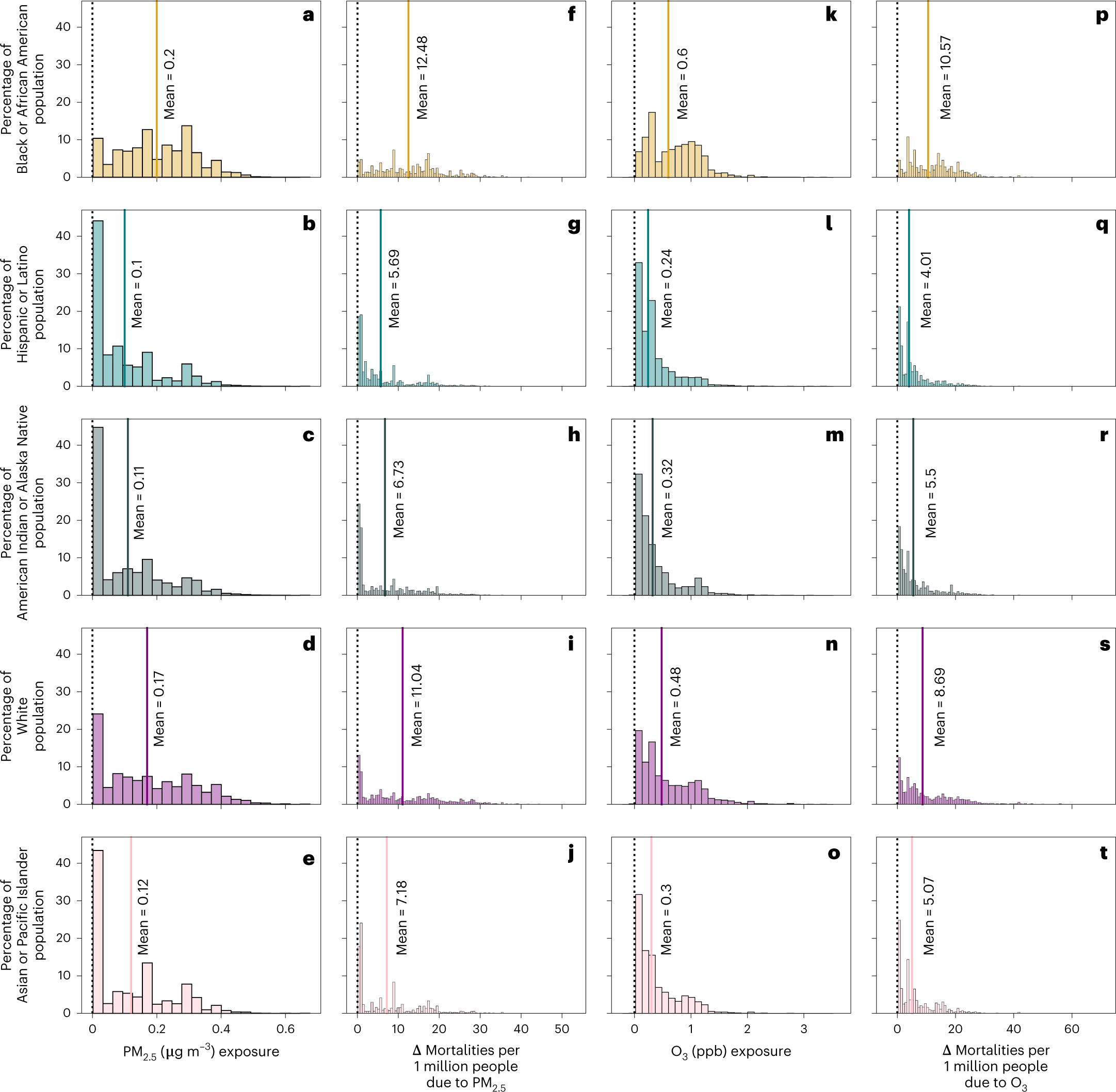

The paper to which I'll refer in this post is this one: Freese, L.M., Chossière, G.P., Eastham, S.D. et al. Nuclear power generation phase-outs redistribute US air quality and climate-related mortality risk. Nat Energy 8, 492–503 (2023).

I'm logged into my Nature account; apparently the paper is not open access.

Some excerpts:

Of course, there is in this paper lots of reference to so-called "renewable energy" and the usual soothsaying that goes with it. In reality, after the expenditure of a little over 4 trillion dollars on this scam between 2015 and 2023, it has done essentially nothing other than accelerate the rate of climate change. After nearly half a century of such soothsaying, there is little reason to expect the result will be any different.

The article discusses this thing called "reality:"

These recent shut-downs include the Indian Point Energy Center second reactor, which was shut down in April 2021 because of environmental and safety concerns due to its proximity to New York City (11). Browns Ferry and Sequoyah nuclear power plant shut-downs in 1985 led to increased coal use (12), as determined by regressions comparing power plant level production in the Tennessee Valley Area before and after the nuclear plant closures. Using similar regressions to assess generation by plants before and after the San Onofre Nuclear Plant (California) shut-down in 2012, ref. 13 found nuclear power plant closure led to increased gas use, as well as increased costs of electricity generation. Recent work has shown that phase-out of nuclear power from 2011 to 2017 in Germany led to replacement by fossil fuels (14).

Many antinukes whine when I point out that they just don't give a rat's ass about climate change. The data referenced in the two paragraphs just posted makes this very clear. Even though they can't actually produce numbers that suggest that there is any form of energy with a risk as low as that of nuclear energy, they swear up and down they give a shit about climate change before launching into the usual selective attention balderdash about how "dangerous" nuclear power is. (Compared to what? Climate change?) They're lying.

The article continues:

Previous work has only addressed subnational-level response to nuclear power shut-downs or has quantified regional and globally averaged avoided mortalities from nuclear power use. Using the InMAP reduced form model, ref. 19 found that the shut-down of three nuclear power plants in the Pennsylvania–New Jersey–Maryland region led to increases in PM2.5 resulting in 126 additional mortalities. Another study (5) quantified the global historical prevented mortalities and CO2 emissions due to historical and potential future nuclear power generation, using average mortality rates and CO2 emissions rates by electricity type. They project mortalities and CO2 emissions based on energy projections by the UN International Atomic Energy Agency out to 2050, finding that 4.39–7.04 million deaths would be prevented by using nuclear power, rather than fossil fuels, due to lower emissions of air pollutants. Previous work also has not consistently accounted for the potential growth of renewable energy, which has been shown to replace the use of fossil fuels (20).

The study breaks down the ethnic distribution of people likely to be killed by nuclear shutdowns.

A figure from the paper:

Fig. 3: Distribution of exposure and mortalities by race and ethnicity for each county in no nuclear.


The caption:

More text:

I was banned some years back at another website, one nowhere near as good as DU, for making the true statement that opposing nuclear energy kills people, referring to James Hansen's seminal 2013 paper stating as much:

Prevented Mortality and Greenhouse Gas Emissions from Historical and Projected Nuclear Power (Pushker A. Kharecha and James E. Hansen, Environ. Sci. Technol., 2013, 47 (9), pp 4889–4895)

Truth has a way of producing scorn. So be it.

The full paper can be accessed at the link from a good university library or with a Nature+ subscription. The authors are from MIT.

Have a nice evening.

Profile Information

Gender: Male

Current location: New Jersey

Member since: 2002

Number of posts: 33,512